In a groundbreaking update released quietly this week, Google’s Bard chatbot has taken a monumental leap forward, propelling it miles ahead of its competitors. The secret behind this revolutionary transformation lies in a single feature that has flown largely under the radar.

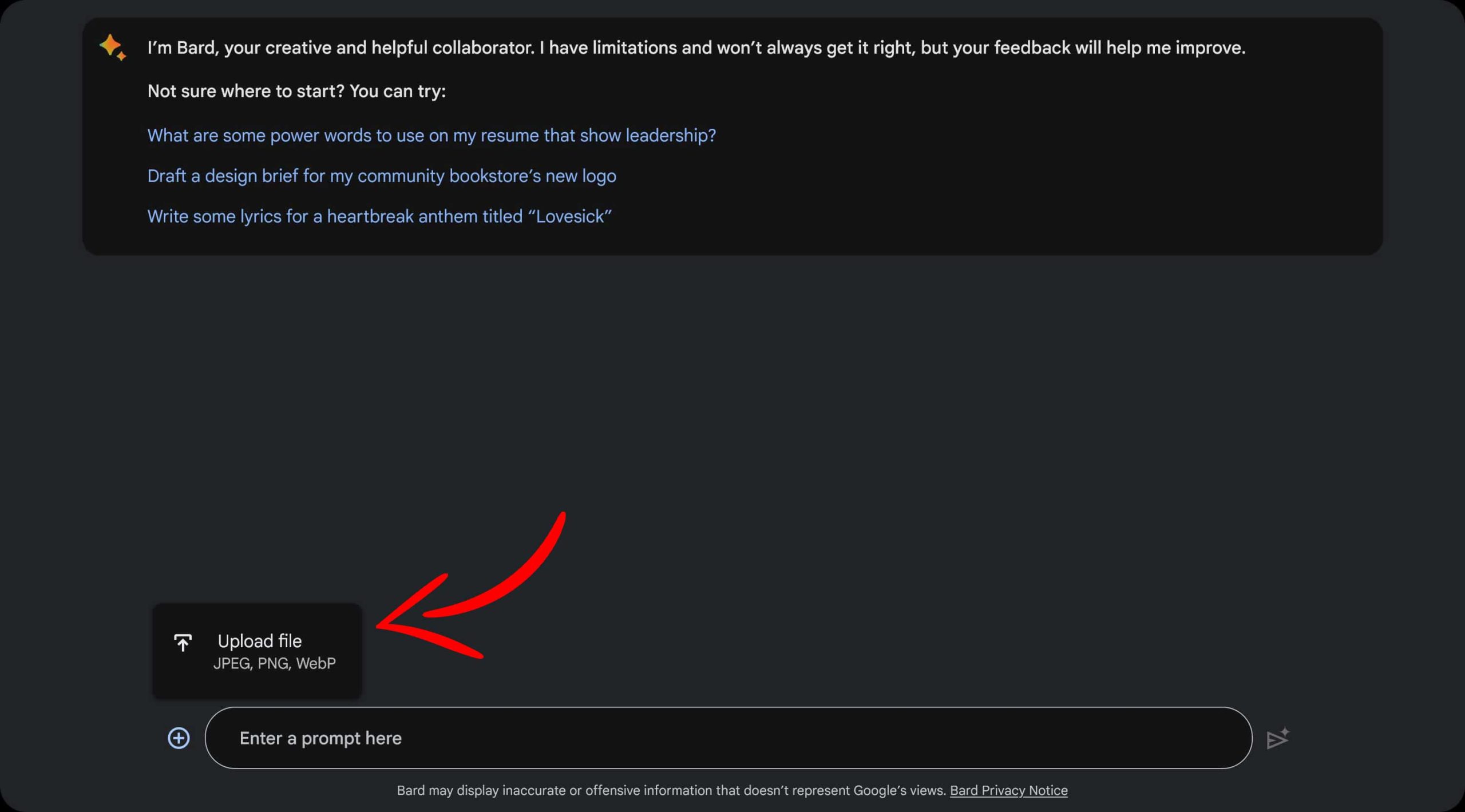

Bard can now accept images as prompts in addition to text, making it officially multimodal.

The Chatbot That Can Take Images as Prompt

This remarkable integration of image recognition with its natural language processing capabilities has unlocked a world of possibilities for Bard users. With the ability to accept both visual and textual input, the chatbot has become a true powerhouse, leaving its competitors trailing in its wake.

The implications of this multimodal capability are far-reaching. Bard can now analyze images and process their contents alongside written prompts. It leverages advanced machine learning algorithms to understand the context and meaning of these visual cues, leading to more accurate and contextually relevant responses.

But How Accurate Can It Be?

Early testers of the new Bard have been astounded by its performance. Users have reported that the chatbot can accurately identify objects, interpret complex scenes, and even discern emotions from images. This newfound ability allows for more dynamic and engaging conversations, making interactions with Bard feel increasingly human-like.

The incorporation of images as prompts also adds an exciting layer of versatility to the chatbot. Users can now present Bard with a picture and ask questions or seek information based on what they see. This feature proves especially useful in scenarios where describing something in words might be challenging or time-consuming.

I Tested Bard With a Messy Image

Out of excitement for this new feature, I took a picture of my messy desk and asked Bard to identify the objects. It did well but not excellent yet, however, in other kinds of tasks it might output better results.

Initially, this is the image with which I prompted it and asked to identify all objects:

I know, it is not a beautiful image, but that’s the point after all. Let’s see how well it did, here are the answers from Bard:

Prompt: Can you list the objects you can identify in this image?

Sure, here are the objects I can identify in the image:

- Wooden table

- Cell phone

- Wallet

- Sunglasses

- Floss

- Magazine

- Bluetooth speaker

- Text: 1600 ilul 0304-01052

What it is missing are the following:

- Disposable Batteries

- Access Card with Holder

- Glasses (the optic ones)

- The earphones

- The glasses cleaning clothes

Not too bad but not excellent as I said earlier. Maybe this is just the start for Bard image processing and it will improve. I have seen people online taking images of their fridges and asking Bard to make receipts with what they have.

Enhancing Real-World Applications with Multimodal AI

The impact of Bard’s multimodal capability extends beyond casual conversations. This powerful update opens the doors to a wide range of real-world applications, pushing the boundaries of what AI-driven chatbots can achieve.

In customer support, for instance, users can now upload images of issues they are facing, allowing Bard to better understand and address their concerns. The ability to receive visual cues streamlines the troubleshooting process and improves overall customer satisfaction.

Bard and Education – A Perfect Match

Educational institutions can harness the power of Bard’s image recognition to revolutionize the learning experience. Students can present visual prompts to Bard, making subjects like art, history, and biology come alive with interactive discussions.

This innovative approach fosters deeper understanding and engagement among students.

Pioneering the Future of Conversational AI

Google’s Bard has firmly established itself as a pioneer in the realm of conversational AI. By integrating multimodal capabilities, it has surpassed traditional text-based chatbots, bringing a new level of sophistication to human-computer interactions.

The potential for further advancements is immense. As Bard continues to learn and adapt from the vast array of multimodal data, its responses will only become more insightful and personalized. Google’s dedication to refining Bard’s AI algorithms ensures that the chatbot will remain at the forefront of the industry.

The Multimodal Revolution Begins

With the introduction of image acceptance, Bard has transformed into a truly multimodal conversational AI powerhouse. Its newfound ability to process visual prompts alongside textual queries sets it apart from its competitors, propelling it miles ahead in the race for AI supremacy.

As users embrace this game-changing feature, Bard’s capabilities will undoubtedly continue to grow, reshaping the landscape of conversational AI and redefining human-computer interactions for years to come. The multimodal revolution has begun, and Google’s Bard is leading the way into an exciting and transformative future.

Conclusion

The introduction of Google Bard’s multimodal capability, allowing the chatbot to accept images as prompts, breaks down language barriers and democratizes AI access on a global scale.

Images transcend linguistic boundaries, enabling people from diverse linguistic backgrounds to interact seamlessly with the AI-powered chatbot. This inclusivity fosters a more inclusive and accessible AI experience for users around the world.

Moreover, the incorporation of images as prompts simplifies communication, making it easier for casual users to engage with Bard. Users no longer need to rely solely on text-based input, which can sometimes be challenging or time-consuming.

Instead, they can effortlessly convey their queries or share information using images, creating a more intuitive and user-friendly interaction with the chatbot.

This amalgamation of image recognition and natural language processing in Bard signifies a significant step forward in AI accessibility and usability, proving that technology can indeed bridge gaps and bring people together, regardless of linguistic or technical barriers.